Welcome and practicals

Background

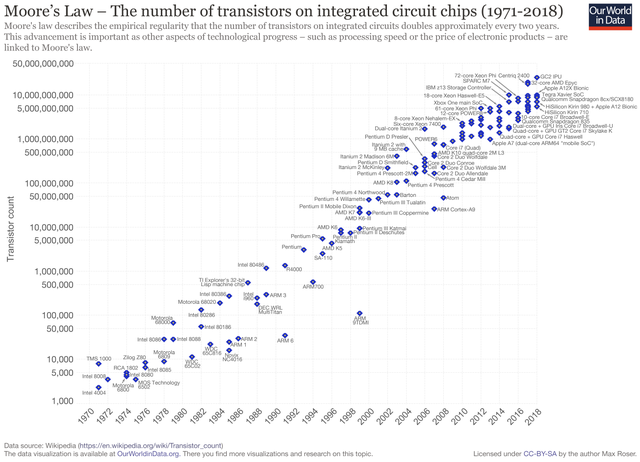

As processors develop, it’s getting harder to increase their clock speed. Instead, new processors tend to have more processing units. To take advantage of the increased resources, programs need to be written to run in parallel.

By Max Roser - https://ourworldindata.org/uploads/2019/05/Transistor-Count-over-time-to-2018.png, CC BY-SA 4.0, https://commons.wikimedia.org/w/index.php?curid=79751151

In High Performance Computing (HPC), a large number of state-of-the-art computers are joined together with a fast network. Using an HPC system efficiently requires a well-designed parallel algorithm.

MPI stands for Message Passing Interface. It is a standard that defines how the individual processes making up a parallel program communicate with each other by sending and receiving messages. Implementations of the standard exist for nearly all platforms (Linux, Windows, OS X…) and many popular languages (C, C++, Fortran, Python…).

This workshop introduces general concepts in parallel programming and the most important functions of the Message Passing Interface.

The material here is derived from this lesson by Jarno Rantaharju, Seyong Kim, Ed Bennett and Tom Pritchard from the Swansea Academy of Advanced Computing. Further inspiration comes from this Python-MPI tutorial.

Prerequisites

This course assumes you are familiar with C, Fortran or Python. It is useful to bring your own code, either a serial code you wish to make parallel or a parallel code you wish to understand better.